The role of AI weapons in Minneapolis is a wake-up call for America

Want to challenge the influence AI has on our democracy in the hands of billionaires? Join the Offline Underground

I.

What kind of AI should everyone be talking about? Is it AI “agents” that will “supercharge” your “enterprise” “productivity”? Or AI agents that build their own social media, where they can pretend to start religions, and hide from humans? Should we be talking about the AI agents that will steal our jobs? Or chatbots that fool our kids into thinking they are in love? Or worse, suicidal?

How about AI agents that help our government wage an invisible war against us?

II.

The most important AI tools in America — the ones everyone should be talking about anytime someone mentions the word AI — are neither agents nor chatbots. They are the complex, militarized tools DHS is building with Palantir and deploying in Minneapolis and cities across the country. These applications are undermining our democratic foundations and serving as engines of political vengeance — determining who is a domestic terrorist, who deserves deportation and punishment, and who is likely to be on their couch when the government barges in through the front door.

But few will tell you that. That is because the AI industry has billions in marketing money to spend, hundreds of millions to lobby, and a tight grip on policy under this administration. They are interested in selling you a story where AI leads to economic and personal salvation — or American re-industrialization — in order to protect their investments and make unimaginable profits. These are the narratives I was forced to push endlessly while working for big tech CEOs.

They do not want you to know what AI looks like in the real world. They do not want you to look at the ruins of Gaza, or the fiberoptic webs of Lyman, Ukraine. They do not want you to read the user guide of Palantir apps used in Minneapolis.

They don’t want you to know that AI is everywhere so that you can train big tech’s machine learning models, so corporations can automate your work, set prices for you, discriminate against you — and potentially allow the government to target you.

They don’t want you to notice that every hour, and all around the world, AI tools made by Silicon Valley are being used to kill and take away people’s rights — and with little accountability, regulatory oversight, or public understanding.

There is some debate about AI models like Claude threatening software engineering jobs — or worse, Grok undressing women and minors — but there is little mainstream discussion regarding the DHS’s AI platforms, which will soon be collecting lists of Trump’s critics from big tech companies, lists of Jewish students at universities such as my alma mater, and potentially, the protected health and social security information of the entire nation.

III.

Following the brutal killing of two citizens of Minneapolis by ICE, the U.S. government was quick to smear the dead and call them terrorists — shamelessly conceding that the “War on Terror” had been flipped against the American people. “Any technology that we build to harm, repress, and annihilate other people can always circle back around and be used to do the same to us,” Irna Landrum, a civil rights advocate, told Tech Policy Press in a podcast after the publication published her important testimony from the ground in Minneapolis: How ICE uses AI to automate authoritarianism.

If you followed the media narrative, the situation in Minneapolis seemed more like the late-stage War in Afghanistan than a typical Minnesota winter. Videos showed green smoke from chemical weapons as ICE stormed neighborhoods, kidnapping people in one of the strongest immigrant communities in the country. New York Times reporter Thomas Gibbons-Neff, who served as a Marine in Afghanistan, made a thorough comparison of the ICE weapons used against Minneapolis residents and battlefield equipment, examining an arrest in the city that was recently ruled unconstitutional by a federal court judge.

As in the final days of Afghanistan, Palantir’s AI tools were in the middle of the action, coordinating the “full lifecycle” of deportation operations for ICE ever since the September roll-out of their new platform, ImmigrationOS. These tools helped ICE code the violent rhetoric of this administration into the real world, helping determine who is deserving of justice and constitutional rights, and who is not.

In the time Palantir has worked for the DHS, immigrants have been singled out for deportation based on their skin color, and even for protected free speech, like an autism awareness tattoo. Palantir’s platform could even be behind an alleged database of “domestic terrorists,” which an ICE agent revealed to a protester as he tried to scan their face with a facial recognition tool, in a video from Maine that has now gone viral.

Within the DHS, the enforcement of ideological prejudice has become Palantir’s trade. Palantir’s “ontology,” which defines what represents what in a digital system (who is a terrorist, who is a suspect, who is a target or a threat) — paired with an administration with a total disregard for truth, equality, or fairness — has created an existential threat to our democracy and rule of law. This “ontology” exerts and automates the worldview of the Trump administration domestically by force, whether it is on-the-ground in operations by ICE and CBP, or bureaucratically through fraud investigations and funding purges.

The Department of Health and Human Services is currently using Palantir to enforce executive orders 14151, “Ending Radical and Wasteful Government DEI Programs and Preferencing,” and 14168, “Defending Women From Gender Ideology Extremism and Restoring Biological Truth to the Federal Government.” These orders led to waves of layoffs and ended $3 billion in research funding from the National Institutes of Health, Centers for Disease Control, and National Science Foundation. They also led NASA HQ to direct the removal of any mentions of equity, inclusion, accessibility, indigenous people, environmental justice, underrepresented groups of people, LGBTQ issues, or topics for women from its website.

Although this might seem a minor detail, any fair digital governance system would account for all of these themes and issues in the design of an instrument of responsible statecraft. In a just world, Palantir’s claim to support Western democracy while undermining core principles of such democracies, like the concepts of equity or women’s rights, should lead to questioning, accountability, and regulation. But instead, Palantir’s software continues to help the government grow the list of targets within the sights of AI weapons in America. That list has expanded dramatically since I started speaking out: from immigrants and Palestinians, to foreign student protesters and op-ed writers, to Americans who are exercising their constitutional right to protest.

Yet, after messy, militarized operations that pitted the latest high-tech war tools against the citizens of Minneapolis, the government is making a partial retreat, withdrawing 700 of the approximately 3,000 federal agents from the city while pushing towards the suburbs. Even Palantir is making ludicrous attempts to distance itself from ICE. “If you are critical of ICE, you should be out there protesting for more Palantir,” CEO Alex Karp said in his last earnings call.

However, Palantir should be inseparable from the legacy of ICE, especially since it took on the full “lifecycle” of immigration enforcement, and brought its engineers to the front lines. More importantly, ICE should be inseparable from the legacy of AI. As one of the world’s best-funded, most politically notable, and most prolific deployers of AI tools, ICE embodies the ultimate promise behind this technology thus far: empowering and enriching billionaires at the expense of our privacy, security, and human rights.

At what might prove to be a pivotal point in history, Minneapolis showed the administration that community, peaceful resistance, and a particularly disciplined kind of civil disobedience are more powerful than the wanton application of violence, surveillance, and discrimination by ICE, Palantir, and the Trump administration. They proved that, although we still might be under siege by these forces in cities around the country, Americans are ready to challenge the criminals in power: the billionaires and government officials who are propped up by big tech, mass surveillance, incarceration, resettlement, and war.

IV.

As the tech stock market tumbles, more and more people are coming to the same realization that shocked me last year when I left the tech industry. The realization that, although we have trouble articulating the value of AI for our economy and culture, we can easily pinpoint the many harmful, if not existentially-threatening effects AI has across many aspects of all of our lives. In fact, we might have decades of work ahead of us to undo these harms. Tech journalist and author Cory Doctorow refers to AI as the “asbestos” of our time.

Exploitative AI algorithms are the reason people are addicted to their screens, the reason we are losing our ability to focus and think critically. AI is fueling wars and mass resettlement projects that never seem to end. AI surveillance is dismantling our constitutional rights, harming immigrants, destroying our workplaces, our educational experiences, and threatening the value of our labor.

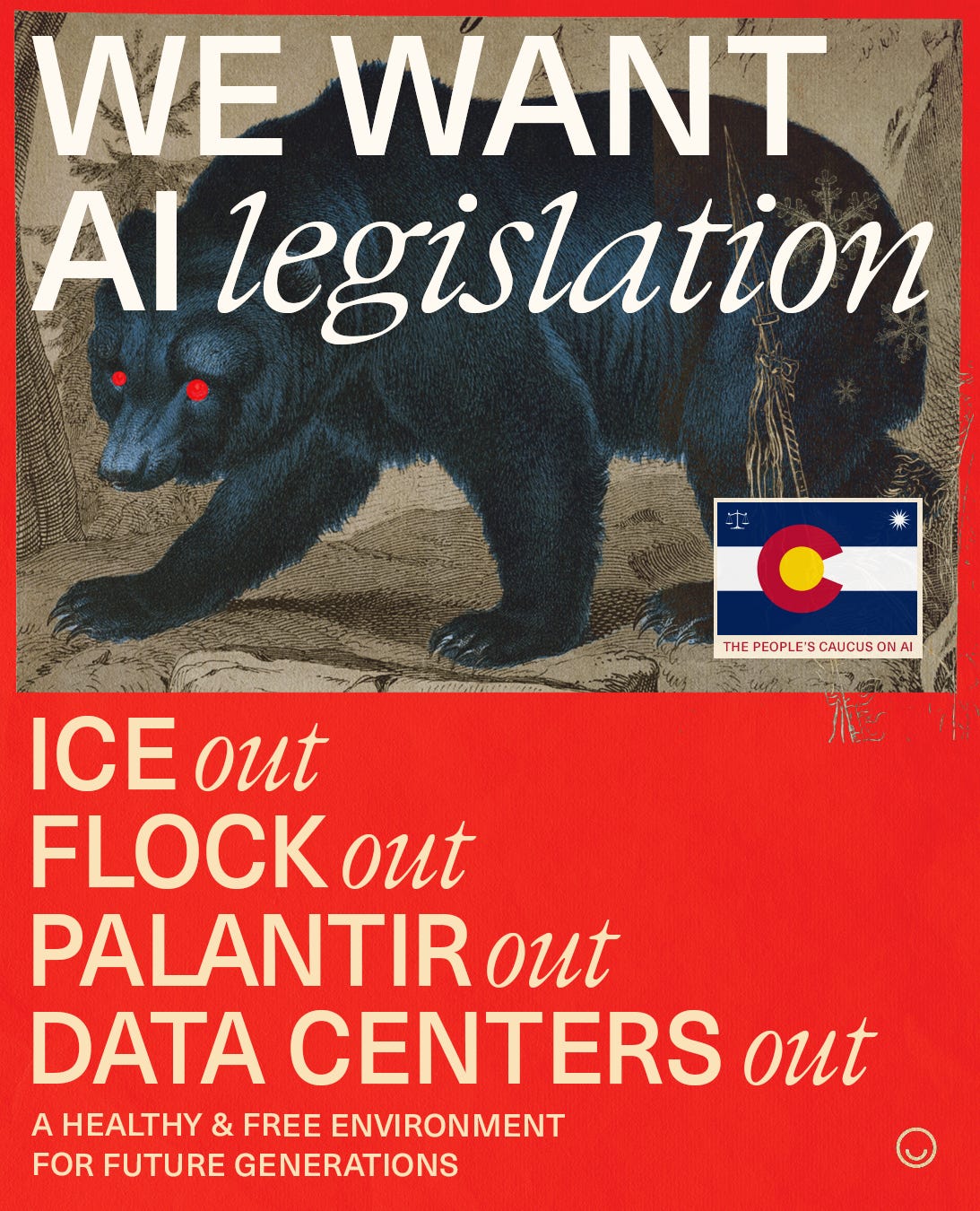

Finally, the data centers that are fueling this economy are polluting our communities and leading to explosive demand for power and water at a time when we contend with historic droughts and irreversible climate change.

Using any social media platform today — from Google to Facebook to Instagram to X to Reddit — feels profoundly unethical, as nearly all have led us into this world — by abusing our data for the training of AI models, helping ICE and the IDF target people, and furthering the aims of a genocidal project in Palestine. The DHS is now exploring ways to use the ad-tech behind these social platforms to help track the location of targets and support investigations.

In Denver, we have been closely watching events unfold in Minneapolis and preparing for the worst: another prolonged assault on a sanctuary city by ICE using advanced military strategies, weapons, and AI targeting tools. We also have to prepare for strange, militarized operations, such as the one Landrum described in her interview with Tech Policy Press, in which ICE agents lured protesters by honking repeatedly (a signal used by protesters to indicate an ICE raid) in order to trap them and scan their faces for a database.

We are also fighting AI surveillance across various other fronts. There are major decisions regarding AI legislation, data centers, and Flock cameras and drones across the state this month — and we’ve taken up the fight with union organizers, journalists, activists, researchers, and those who are ready to make a difference.

The winter of AI has begun, and we are launching and supporting various projects to help uncover the hidden architectures of AI exploitation.

We have joined The People’s Caucus on AI, a citizen-led initiative to push for AI legislation in Colorado as the state debates the first comprehensive statewide regulations on AI in the country. So far, we have helped send over 18,000 letters to representatives in support of AI regulation, and we have been busy planning town halls and events throughout the month as deliberations are made in the state senate. Last week, we held a packed town hall on ICE, Palantir, and Flock with former state senator Tim Hernandez and David Seligman, candidate for attorney general in Colorado, who just filed a first-in-the-nation lawsuit against an AI company for discrimination under the Fair Credit Reporting Act.

Ziggurat, this publication, has been established as a nonprofit design and education studio to challenge the abuses of big tech and AI surveillance. Subscribe and stay tuned for more in-depth articles, new contributors, educational materials, and research collaborations with think tanks and advocates. We will adopt the subscriber-led, deep-dive approach that has fueled some of the best new media outlets today, such as Equator Mag and 404 Media.

Against Machines, our movement to challenge tech-fascism and the exploitation of people by big tech and AI, is growing. Over the past few months, we have been hosting creative showcases, literary exchanges, and town halls with local politicians, building offline community and literacy on tech and civil rights issues. This month, we will be campaigning for the People’s Caucus on AI in support of the ACLU as they push to strengthen AI regulations in Colorado at a pivotal time.

That brings us to Offline Underground, our upcoming physical-only magazine, calendar, and project to build an offline network of organizations and individuals challenging big tech. We aim to connect intersectional elements of the tech resistance from across political divides: climate activists, union organizers, veterans, artists, luddites, hacktivists, concerned parents and teachers, or anyone who is simply fed up with giving their tax money to surveillance tech billionaires. Offline Underground will also share the ideas of Brooklyn’s Strother School of Radical Attention, and join the national attention activism movement.

You can get a copy of the calendar and magazine FOR FREE at any of our Against Machines events and town halls, and receive it in your physical inbox with a paid subscription to Ziggurat. Subscribers will start receiving their first copies next week.

We are currently facing opposition with hundreds of millions of dollars in lobbying money, and our message runs contrary to that of dangerous and litigious people. Our operations are entirely reader funded, so please consider supporting us with a subscription or through a custom donation via our “Founder” subscription tier.

The best way to help us today, however, is simply to share this newsletter far and wide and help us grow our community.

We are starting Offline Underground in Denver and New York. Contact hi@zig.art if you want to bring any of these initiatives to your city.

In related but separate news, I’m happy to announce that I’ve joined PauseAI, an international movement supporting AI legislation, as Denver Group Lead.

Interested in organizing? Send me a message.